The Value of Agile Development

At Acadis, we’re committed to enhancing our solutions through Agile development. It’s an approach whose name implies precisely the benefit it delivers: an opportunity to quickly define and validate our users’ requirements, engage our entire solution team to develop the highest-priority features first, and achieve the targeted project milestone dates with the requisite (but no superfluous) functionality. Put another way, it means that we can deliver products that are better attuned to our clients’ continually evolving needs, and we can deliver them faster.

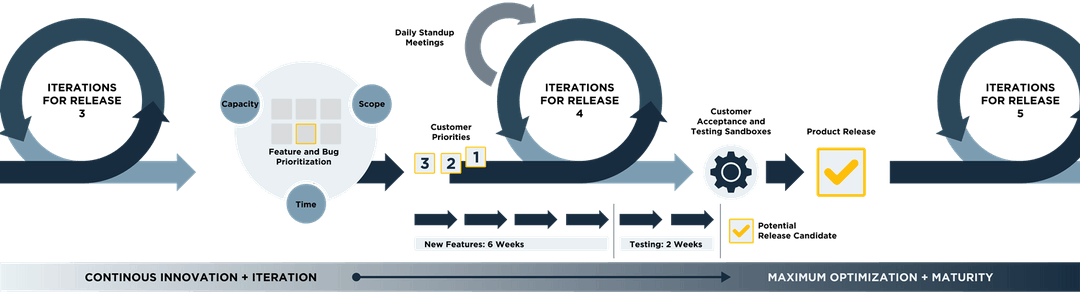

A critical foundation of Agile development is the quality control structure that’s naturally built into the process. Unlike traditional waterfall development (which is tied to a strict, linear, stage-by-stage approach), Agile stresses quality of product through continuous feedback loops and iterations. So we identify and document acceptance criteria for each feature, and then build automated tests into the software to ensure that those acceptance criteria are met prior to features being manually tested (as illustrated in Figure 1 below).

The result is a significant drop in imperfections in delivered software — and the ability to rapidly change functionality without jeopardizing existing capabilities. So our clients get uninterrupted access to the features they rely on most, all while being able to take advantage of a continuous stream of product improvements.

AGILE BENEFITS AND PRINCIPLES

SOFTWARE DEVELOPMENT PROCESS

Agile development goals:

- Define and validate user requirements quickly

- Develop features in sequence of highest priority

- Set and achieve project milestones to reach full system functionality

- Build quality control into the process

- Provide continuous feedback opportunities

- Document acceptance criteria for each feature

- Build automated tests into software

- Reduce defects in software developed

ITERATION DEMONSTRATIONS

At the beginning of each iteration, the team works with the customer to identify which of the small features (stories) have the highest business value and places those stories at the top of the list. The team works each story to completion in priority order. By breaking down large modules and functions into smaller, more easily evaluated features, the team can often eliminate functionality that seemed important during design but would never be used. At the end of each iteration, the team demonstrates the functionality developed during that iteration. The demonstration is expected to show that the defined acceptance criteria have been met and, ideally, results in acceptance of the functionality.

Because iterations are short, it is rare for customers and development teams to get out of sync. If they are out of sync, only a small amount of rework is required to get back in sync. This eliminates the common problem of a software solution being delivered several months (or years) after the initial requirements gathering, and no longer meeting the business need either because the requirements were not well understood or they changed in the time it took to deliver the software.

Acadis uses a release train model, in which the developers work on customer features for 6 weeks and then spend the next 2 weeks (affectionately referred to as “cleaning the kitchen”) refactoring old code, adding integration tests or researching new technologies. During that time, the rest of the team performs regression testing and final exploratory testing, deploys to production, communicates with the user base and designs for the next release. If key stakeholders are participating in the iterations, the communication cost for a release is significantly reduced. Acadis works to minimize the cost of regression tests through the use of automated tests.

VELOCITY CHARTS

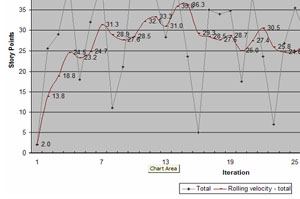

With each iteration, the team records which stories were accepted by the customer and how many story points each story represented. The team uses a rolling average of the past six iterations to determine how many story points it can complete in an iteration. Because the team uses relative estimating in planning poker, it can predict the rate at which it completes story points and project a delivery date for all features that have been estimated. The graphical representation of this information is called a burndown chart.
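The rolling-average projection described above can be sketched in a few lines of Python. This is a minimal illustration, not the team's actual tooling: the function names, the sample history and the 120-point backlog are all assumptions made for the example.

```python
from math import ceil
from statistics import mean


def rolling_velocity(accepted_points, window=6):
    """Average accepted story points over the most recent `window` iterations."""
    if not accepted_points:
        raise ValueError("need at least one completed iteration")
    return mean(accepted_points[-window:])


def iterations_remaining(backlog_points, velocity):
    """Whole iterations needed to finish the estimated backlog at this velocity."""
    if velocity <= 0:
        raise ValueError("velocity must be positive")
    return ceil(backlog_points / velocity)


# Hypothetical history: story points accepted in each past iteration.
history = [18, 22, 20, 25, 19, 21, 23]
velocity = rolling_velocity(history)  # only the last six values are averaged
remaining = iterations_remaining(120, velocity)  # 120 = assumed estimated backlog
print(f"velocity: {velocity:.1f} points/iteration, iterations left: {remaining}")
```

Multiplying the remaining iteration count by the iteration length gives the projected delivery date. Only estimated stories can be projected this way, which is why the estimate from planning poker matters.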

PAPER PROTOTYPING

In an effort to minimize the cost of developing software, the Acadis team employs paper prototyping for brand new design areas to identify which design will meet user needs. This process involves members of the Acadis team meeting with customer subject matter experts to understand the typical business functions that are performed. The team creates paper applications, with individual pieces of paper representing each screen of the application. Users are then asked to participate in a usability study, where they “click” on buttons using their finger and “type” in fields by writing on copies or transparencies. The development team observes the users and notes any areas where users have difficulty performing the tasks. At the end of the usability session, the user is interviewed about missing functionality or additional feature needs. The team performs several usability sessions with different users and adjusts the paper prototype based on the feedback. This allows the team to adjust the application as many times as necessary and make very large changes to accommodate new functionality or usability issues without incurring a significant cost.

USER STORIES, PLANNING POKER AND POKER REVIEW

The Acadis team uses a method of relative estimating called “planning poker.” Each desired feature is written as a user story with three elements: who wants the functionality, what action the user wants to perform and what business value the user realizes once the feature is implemented. The feature is then broken down into the smallest piece of usable functionality, so that it can be completed in a single iteration and delivers immediate business value. The Acadis team estimates the complexity of that feature relative to features that have been developed in the past. Used in combination with velocity charts, these estimates let the team predict when features will be delivered to the customer.

Just as a map helps drivers reach their destination when the route is new, poker review is the map that shows the developer the scope of the feature from a testing perspective. The test plan is created before the developer starts the work. This cuts down on misunderstandings and leads to fewer detours. If the team is not able to come up with a clear test plan, the feature can be flagged as needing more design.
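As an illustration, the three-element user story and the poker review rule can be modeled as a small data structure. This is only a sketch: the class and field names, the sample story and the "As a ... I want to ... so that ..." sentence template (a common convention, not something stated above) are assumptions.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class UserStory:
    role: str                         # who wants the functionality
    action: str                       # what the user wants to do
    business_value: str               # what the user gains once it ships
    story_points: int = 0             # relative estimate from planning poker
    test_plan: Tuple[str, ...] = ()   # steps agreed on during poker review

    def as_sentence(self) -> str:
        """Render the story using the assumed sentence template."""
        return f"As a {self.role}, I want to {self.action} so that {self.business_value}."

    def needs_more_design(self) -> bool:
        """A story without a clear test plan is flagged for more design."""
        return len(self.test_plan) == 0


# Hypothetical story used only to exercise the structure.
story = UserStory(
    role="training coordinator",
    action="export a class roster",
    business_value="I can share it with instructors",
    story_points=3,
)
print(story.as_sentence())
print("needs more design:", story.needs_more_design())
```

Keeping the test plan on the story itself makes the "no clear test plan" case easy to detect before development starts.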

TEST-DRIVEN FEATURES

Test-driven features is the practice of linking each requirement (user story) to specific, measurable and verifiable customer acceptance criteria. The requirement is documented in a testing application and tied to the code. If the code satisfies the stated requirements, the automated tests pass. This approach ensures that functionality does not pass from development to quality assurance until the specific criteria have been met. Test-driven features force the development team to meet and often exceed the functional and performance requirements of the application. In addition, the automated test suite runs with each build, preventing undetected breakage in other areas of the application.
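One way to picture the link between acceptance criteria and automated checks is a mapping from each criterion to a function that verifies it. The sketch below is an assumption-laden illustration, not the testing application mentioned above: `sort_roster`, `ready_for_qa` and the two criteria are hypothetical.

```python
from typing import Callable, Dict, List


def sort_roster(names: List[str]) -> List[str]:
    """Hypothetical feature under test: order a roster by last name."""
    return sorted(names, key=lambda full_name: full_name.split()[-1].lower())


def ready_for_qa(criteria: Dict[str, Callable[[], bool]]) -> bool:
    """A feature may move to QA only when every acceptance check passes."""
    failures = [name for name, check in criteria.items() if not check()]
    for name in failures:
        print(f"FAILED acceptance criterion: {name}")
    return not failures


# Each key is an acceptance criterion; each value is the automated check for it.
criteria = {
    "roster is ordered by last name":
        lambda: sort_roster(["Ann Zed", "Bo Abel"]) == ["Bo Abel", "Ann Zed"],
    "an empty roster is allowed":
        lambda: sort_roster([]) == [],
}
print("ready for QA:", ready_for_qa(criteria))
```

Because every criterion has a named check, a failing build points directly at the requirement that is not yet met.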

TEST-DRIVEN DEVELOPMENT

The practice of test-driven development (TDD) is a test-first approach. As opposed to the traditional approach of writing a complete module or function first and then performing tests once development is complete, TDD requires programmers to continually test the software from the outset. Tests are created for most or all of the individual functions of a module. The presentation layer or user interface is generally excluded from TDD, because TDD does not lend itself to testing graphical elements and the cost-to-benefit ratio in evolving applications is therefore not justifiable. Tests are initially designed to fail based on agreed-upon acceptance criteria. Coded functions are then created and continually tested and refined until they pass. Multiple tests may be used to validate that a large set of behaviors passes. Tests are retained and reused anytime the function is changed or as part of a larger test.
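A minimal sketch of the test-first cycle, using Python's standard `unittest` module, might look like the following. The function `is_expired` and its rule are hypothetical. In practice the two tests would be written first and would fail until the function body was implemented.

```python
import unittest
from datetime import date


def is_expired(expires_on: date, today: date) -> bool:
    """Hypothetical function: a record is expired only after its expiry date."""
    return today > expires_on


class IsExpiredTest(unittest.TestCase):
    """Written before is_expired existed; each test encodes one criterion."""

    def test_not_expired_on_expiry_date(self):
        self.assertFalse(is_expired(date(2024, 6, 30), today=date(2024, 6, 30)))

    def test_expired_the_day_after(self):
        self.assertTrue(is_expired(date(2024, 6, 30), today=date(2024, 7, 1)))


if __name__ == "__main__":
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(IsExpiredTest)
    unittest.TextTestRunner(verbosity=2).run(suite)
```

The tests stay in the code base after they pass, so they run again whenever the function changes.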

The benefits of using test-driven development practices with full regression testing include well-architected pieces of code that adhere to programming best practices, and a full set of regression tests that allow the application to be refactored at a rapid pace if business processes change.

Code coverage of new modules will be measured and is expected to exceed 95% using automated tools to capture and report results.

DESK CHECK

In software, the transition from development to quality assurance is often fraught with misunderstandings about what the desired feature should contain. The desk check is meant to solve that problem and the negative feedback loops that often result from repeated misunderstandings. When a developer believes they are finished with the feature, they call the designer and a quality assurance person to perform an in-person desk check. The quality assurance person sits down at the developer’s machine to drive, walks through the test plan and does a quick quality check. The designer looks for proper use of patterns and usability, and checks that the feature matches the customer’s request. A punch list of any items that need to be corrected is identified and documented by the designer. The team may identify areas that require more business analysis or input from the product management team or customers. Once the punch list is finished, the feature can progress to quality assurance.

CONTINUOUS INTEGRATION OF AUTOMATED BUILD

The Acadis team follows a continuous integration cycle that includes automated product builds. Each time a developer checks in modified source code, a battery of Acadis® automated regression tests is run automatically to ensure that the code is clean and that it does not impact any other area of the application. The code is gated so that failing code cannot move to production.
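The gating idea can be illustrated with a short script that runs a test command and reports whether the build may proceed. This is a sketch under stated assumptions, not the actual Acadis build pipeline: the `gate` function and the stand-in commands are hypothetical, and a real job would substitute its own regression test command.

```python
import subprocess
import sys
from typing import List


def gate(command: List[str]) -> int:
    """Return 0 if the suite passes and 1 otherwise, so a CI job can block promotion."""
    completed = subprocess.run(command)
    if completed.returncode != 0:
        print("Regression suite failed: build is blocked.")
        return 1
    print("Regression suite passed: build may proceed.")
    return 0


# Stand-in commands so the sketch runs anywhere. A real job would pass its own
# test command, for example [sys.executable, "-m", "unittest", "discover"].
passing = [sys.executable, "-c", "raise SystemExit(0)"]
failing = [sys.executable, "-c", "raise SystemExit(1)"]
print(gate(passing), gate(failing))
```

A build server would typically use the returned value as the job's exit status, so that a nonzero result stops the pipeline before deployment.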

RETROSPECTIVES

The Acadis team periodically holds an internal retrospective, sometimes referred to as a “lessons learned meeting” or “post mortem,” at the end of iterations. At the end of each production deployment milestone, a retrospective meeting will be held with the customer and the Acadis team to improve the process for the future.

In retrospectives, the team asks the following questions:

- What did we do well that we could benefit from doing again in the future?

- What did we learn?

- What should we do differently next time?

- What still puzzles us?

Answering these questions allows the team to benefit from previous learning experiences.

TRADITIONAL QUALITY ASSURANCE PRACTICES

In addition to the specific quality measures that Agile provides, Acadis employs several traditional quality assurance practices.

EXPLORATORY TESTING

Once the customer is satisfied with the functionality demonstrated during the iteration demos (see above), the Acadis Quality Assurance team will run a series of functional testing scenarios to ensure the delivered feature:

- Integrates well with the rest of the Acadis® software

- Meets security requirements

- Meets or exceeds performance standards

- Conforms to UI standards

This process is generally shorter than in a traditional application cycle, because much of the functionality has already been tested in the course of building, and automated regression suites exist to verify that no problems were introduced through changes or the addition of new features.

DOCUMENTATION

For each milestone release, Acadis will provide appropriate build documentation. All defects identified in testing, error resolutions, and configuration changes will be recorded in the defect tracking system. Defect fixes will be ranked by impact and priority and separated from system enhancements.
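The ranking rule described above (defects ordered by impact and priority, kept apart from enhancements) can be sketched as a simple sort. The `Ticket` fields, the 1-is-highest scales and the sample tickets are assumptions for illustration. They do not describe the actual defect tracking system.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Ticket:
    title: str
    kind: str      # "defect" or "enhancement"
    impact: int    # assumed scale: 1 = highest impact
    priority: int  # assumed scale: 1 = highest priority


def ranked_defects(tickets: List[Ticket]) -> List[Ticket]:
    """Keep only defects and order them by impact, then by priority."""
    defects = [t for t in tickets if t.kind == "defect"]
    return sorted(defects, key=lambda t: (t.impact, t.priority))


# Hypothetical tickets used only to exercise the sort.
tickets = [
    Ticket("Report footer misaligned", "defect", impact=3, priority=2),
    Ticket("Add bulk import", "enhancement", impact=1, priority=1),
    Ticket("Login fails after timeout", "defect", impact=1, priority=1),
    Ticket("Search ignores accents", "defect", impact=1, priority=2),
]
for ticket in ranked_defects(tickets):
    print(ticket.impact, ticket.priority, ticket.title)
```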

DEFECT FIX PRIORITIZATION

The customer and Acadis team will be responsible for determining which defects should be fixed before user acceptance testing occurs. The number of defects is smaller than in traditional application development projects because functionality does not enter quality assurance until it meets the functional criteria.

CUSTOMER INVOLVEMENT AND SIGN-OFF

Customer involvement and customer satisfaction go hand in hand during projects. Early in the process, the customer and Acadis team will be involved in the prioritization of feature development, and customer subject matter experts will be involved in the usability testing of designed features through paper prototyping. Because most of the testing is automated, the development team will be able to adjust the application and feature priorities based on this critical input. We have found that this process helps the user community get exactly what is needed and keeps it actively engaged in evaluating trade-offs.

Throughout the process, the customer and the Acadis team will be responsible for reviewing the functionality delivered with each iteration and for ensuring that the demonstrated software meets the stated needs. At the end of each release cycle, Acadis will promote the software to the customer test servers for user acceptance testing (UAT) and final sign-off.